You spent 3 hours fact-checking.

Was it worth it?

Hyperthesis runs deep research with verified citations, structured synthesis, and a living knowledge library. Research you can actually use — and defend.


We analyzed 400+ forum threads, research papers, and professional surveys. The same six problems came up every time.

Between 3% and 13% of the citations generated by deep research agents don't exist. URLs that look real, papers never written, statistics from nowhere.

AI research tools sound authoritative regardless of accuracy. Executives make real business decisions on content that sounds right but isn't.

5–6 ChatGPT tabs, 2–3 Claude tabs, Gemini, Notion. Every session starts from scratch. The breakthrough from 2 weeks ago is gone forever.

No persistent memory. No shared library. Re-explaining context costs 10–15 minutes per switch. Past breakthroughs are lost.

Wide summaries, not deep analysis. Can't weight sources by recency or authority. Confuses blog posts with peer-reviewed research.

Consultants can't deliver it to clients. Journalists can't publish it. Academics face retractions. No audit trail to defend a position.

The way an expert analyst would — systematically, with primary sources, every claim verified before it reaches you.

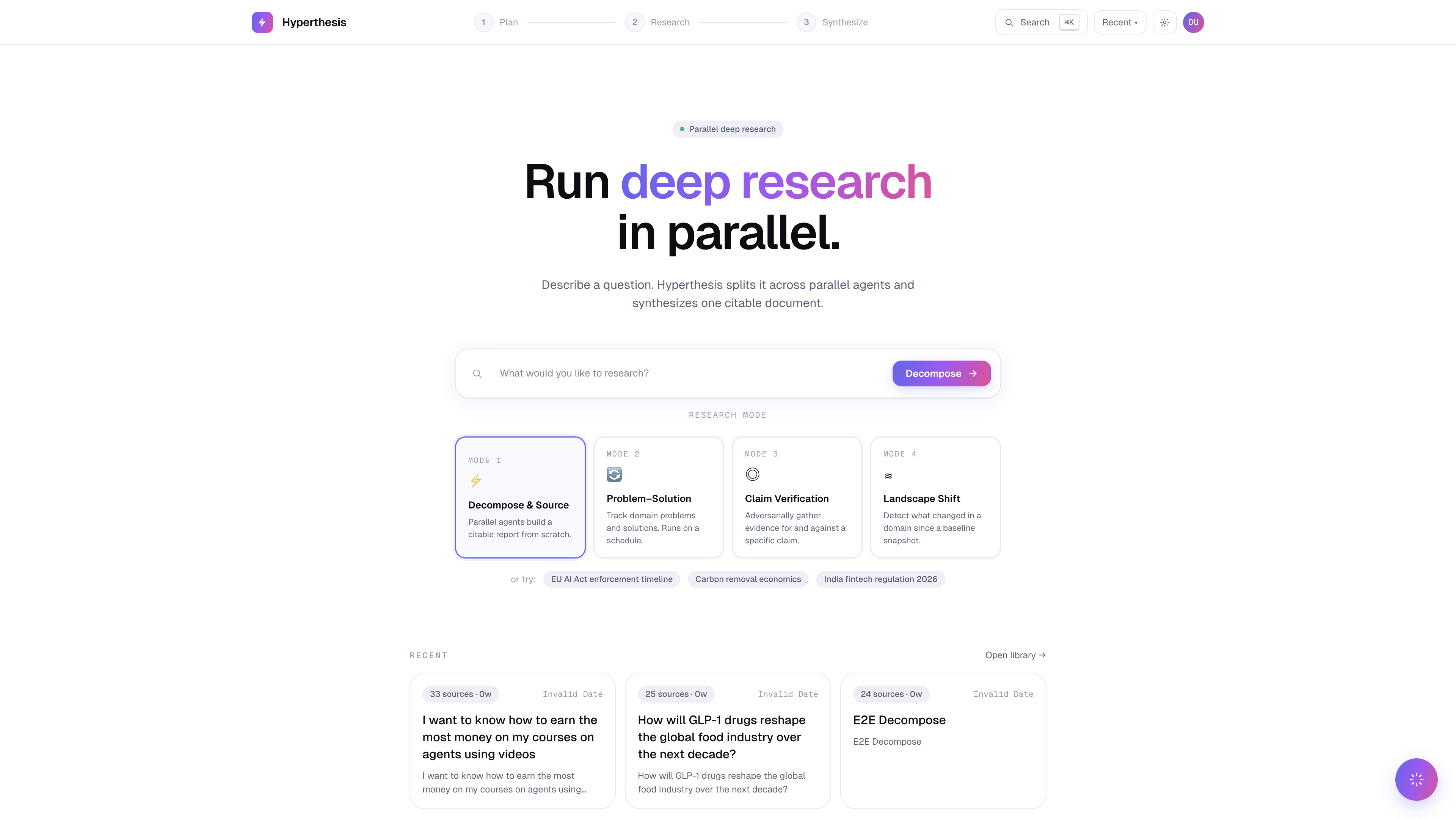

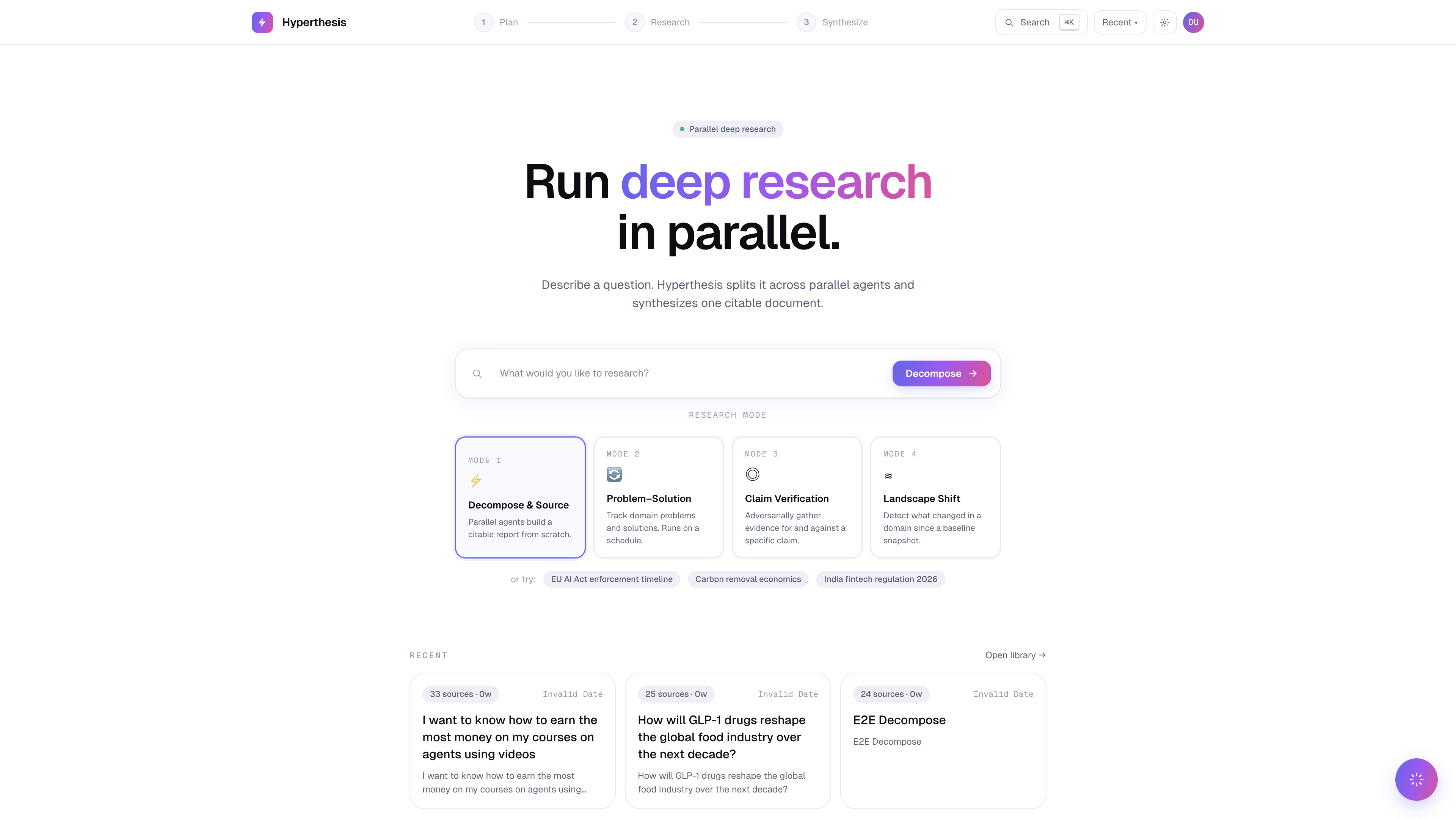

Paste your research question or brief. Hyperthesis scores and structures it into a formal strategy before a single query runs — eliminating "garbage in, garbage out."

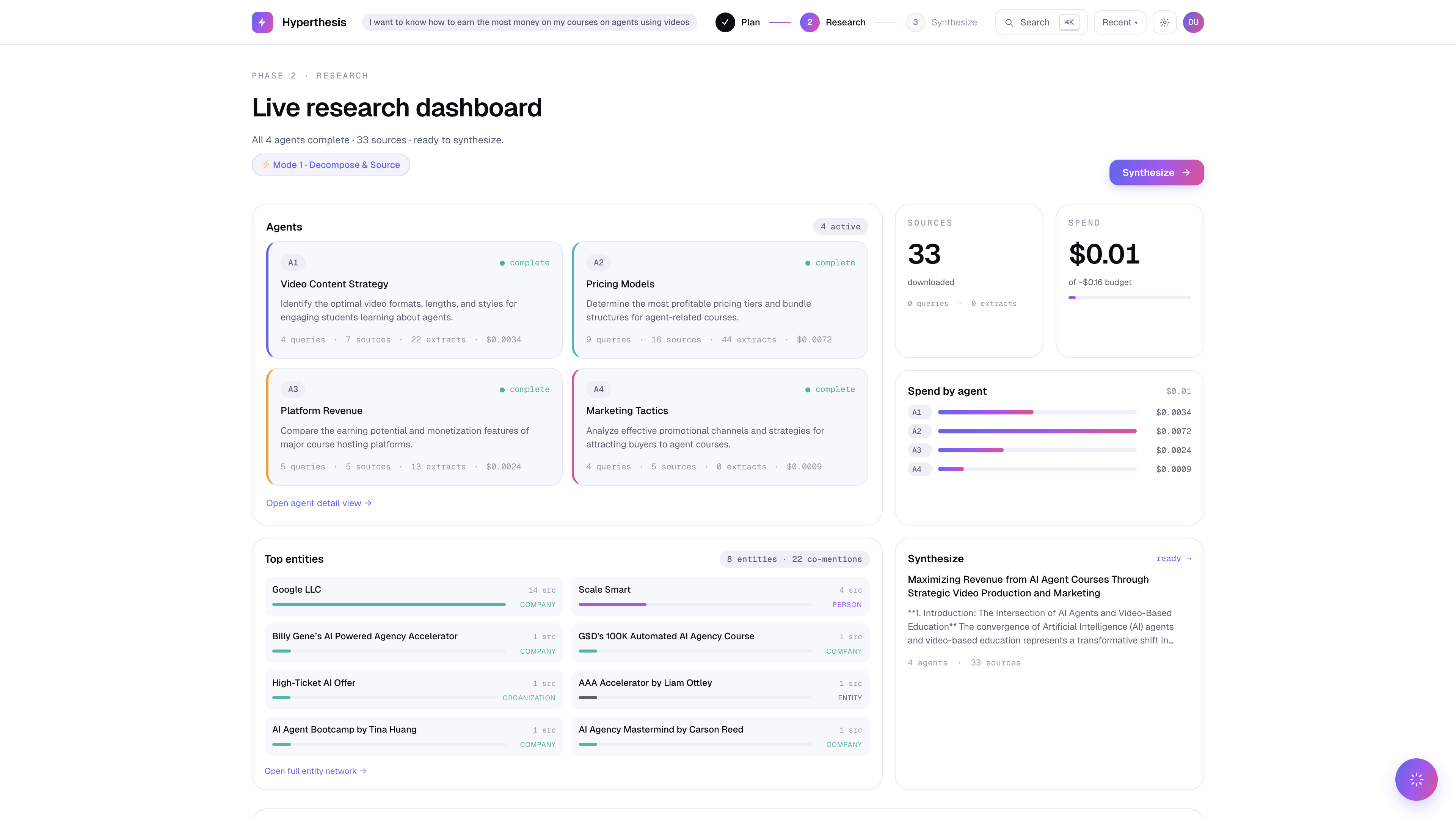

The agent decomposes your question into entities and sub-questions. Breadth first, then depth — targeting specific gaps. Stops when it has enough.
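The "breadth first, then depth" loop can be pictured roughly as below. This is a minimal sketch, not Hyperthesis's actual implementation: the `research` function, the `search` callback, and the `min_evidence` and `max_depth_rounds` parameters are all hypothetical names chosen for illustration, and the sub-questions are assumed to be already decomposed.

```python
from typing import Callable

def research(sub_questions: list[str],
             search: Callable[[str, int], list[str]],
             min_evidence: int = 2,
             max_depth_rounds: int = 3) -> dict[str, list[str]]:
    """Run one breadth pass over every sub-question, then depth passes on the gaps."""
    # Breadth first: one shallow query per sub-question.
    evidence = {q: list(search(q, 0)) for q in sub_questions}

    # Depth: revisit only the sub-questions that still lack evidence.
    for round_no in range(1, max_depth_rounds + 1):
        gaps = [q for q in sub_questions if len(evidence[q]) < min_evidence]
        if not gaps:  # every sub-question has enough evidence, so stop
            break
        for q in gaps:
            evidence[q].extend(search(q, round_no))
    return evidence

def fake_search(question: str, depth: int) -> list[str]:
    # Stand-in for a real search backend (assumption): "pricing" returns
    # two results per call, everything else returns one.
    if "pricing" in question:
        return [f"{question}#d{depth}-a", f"{question}#d{depth}-b"]
    return [f"{question}#d{depth}"]

result = research(["pricing", "market size"], fake_search)
```

Here "pricing" is satisfied by the breadth pass alone, while "market size" gets one extra depth query before the loop stops.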

Every URL checked for liveness. Every claim mapped to its source. Source quality scored by domain authority, recency, and type.
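One way to picture scoring by domain authority, recency, and type is a simple weighted blend. The sketch below is illustrative only: the `Source` fields, the type weights, the two-year half-life, and the 0.4/0.3/0.3 mix are assumptions, not values published by Hyperthesis.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical weights per source type; not taken from Hyperthesis.
TYPE_WEIGHTS = {"peer_reviewed": 1.0, "official_report": 0.8, "news": 0.6, "blog": 0.3}

@dataclass
class Source:
    url: str
    domain_authority: float  # assumed to be normalized to the range 0..1
    published: date
    source_type: str

def recency_score(published: date, today: date, half_life_days: int = 730) -> float:
    """Exponential decay: the score halves every half_life_days."""
    age_days = max((today - published).days, 0)
    return 0.5 ** (age_days / half_life_days)

def quality_score(src: Source, today: date) -> float:
    """Blend authority, recency, and type into a single 0..1 score."""
    type_weight = TYPE_WEIGHTS.get(src.source_type, 0.3)  # unknown types rank like blogs
    return (0.4 * src.domain_authority
            + 0.3 * recency_score(src.published, today)
            + 0.3 * type_weight)

today = date(2025, 1, 1)
paper = Source("https://example.org/paper", 0.9, date(2024, 1, 1), "peer_reviewed")
blog = Source("https://example.com/post", 0.4, date(2019, 1, 1), "blog")
ranked = sorted([blog, paper], key=lambda s: quality_score(s, today), reverse=True)
```

With these assumed weights, the recent peer-reviewed paper ranks above the older blog post, which is the distinction the scoring is meant to capture.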

Output formatted for your deliverable — analyst report, literature review, competitive briefing. Every finding linked. Every section auditable.

Different research questions need different strategies.

Broad research explored systematically. Breadth first, then depth. Full structured report with entity graph and inline citations.

Monitor a domain over time. Maintains a living registry of open problems, candidate solutions, and validation states.

Verify a specific assertion before it goes into a deliverable. Returns a verdict with full evidence chain.

Structured analysis of competing products, companies, or approaches. Tracks each against defined criteria.

We ran the same research question through all four tools.

| Capability | Hyperthesis | ChatGPT | Perplexity | Gemini |
|---|---|---|---|---|
| Citation URL verification | ✓ Live, every source | Partial | Partial | ✗ |
| Claim-to-source mapping | ✓ Every claim linked | ✗ List at end | ✓ Inline | ✗ |
| Source quality scoring | ✓ Authority + recency | ✗ | ✗ | ✗ |
| Research memory / library | ✓ Persistent, searchable | Partial | ✗ | ✗ |
| Multiple research modes | ✓ 4 modes | ✗ | ✗ | ✗ |
| Team knowledge sharing | ✓ Shared library | ✗ | ✗ | ✗ |
| Entity graph | ✓ Full map | ✗ | ✗ | ✗ |

"Turn a 10-hour research sprint into a 3-hour deliverable — with every claim sourced."

"Sourced research your editor will let through. Every claim linked to its original source."

"Every citation verified against DOI. Source quality scored. An audit trail any reviewer accepts."

"Stop absorbing research costs. Become a temporary expert in 30 minutes."

Credits never expire. Enterprise pricing available — contact us.

10 free research runs. No card. Verified citations from the first session.
